Introduction to GLM-5

Zhipu AI has released the open-source model GLM-5, specifically designed for complex agentic tasks. From autonomously planning a 7-person gathering route in 4 minutes to generating a news summary from multiple sources, GLM-5 demonstrates powerful capabilities in multi-step task decomposition and tool invocation.

Task Example: Coordinating a Gathering

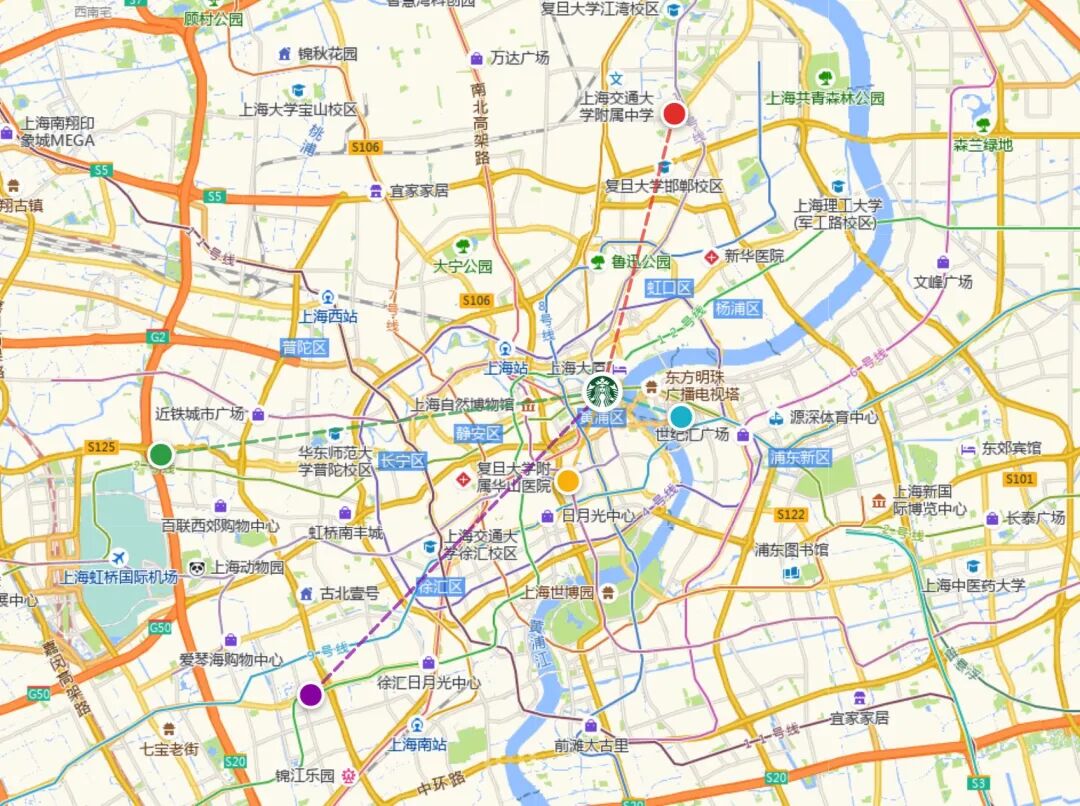

Coordinating a gathering for six or seven people can be challenging. Everyone may start from a different location and use a different mode of transportation, and commute times should be fair to all. Instead of manually collecting everyone’s starting points and checking a map, GLM-5 can automate this process.

I provided GLM-5 with everyone’s starting locations.

In just 4 minutes, without any human intervention, it autonomously invoked mapping tools 40 times and provided a comprehensive route suggestion, including travel advice for each person.

It even generated a clear and complete visual route map with estimated travel times.

Overview of GLM-5

GLM-5, released just before the Lunar New Year, is designed for multi-stage, complex agentic tasks. It focuses on breaking a complex task into steps, invoking external tools, and carrying out those steps autonomously. This is the same direction taken by models like Opus 4.6.

In this article, I will share everything about GLM-5:

- Overview of GLM-5 specifications and usage.

- Agent effectiveness experiments and skill methods: gathering coordination, automatic news generation…

- My experience using GLM-5 and thoughts on the evolution of agents in 2026.

GLM-5 Specifications

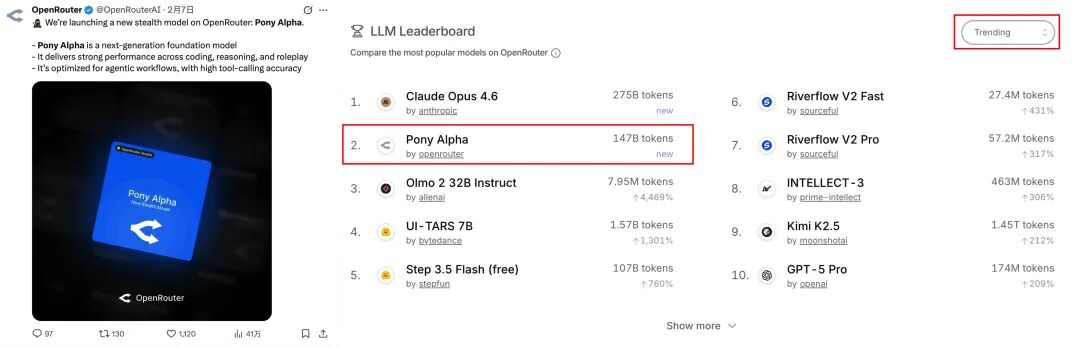

Before its official release, a model named “Pony Alpha” quietly appeared on OpenRouter, ranking high in model trends. This was later revealed to be a test version of GLM-5.

GLM-5 is now live on Z.ai, Zhipu Qingyan, and BigModel, where it can be used directly.

Thanks to enhanced agent capabilities, Z.ai has launched an agent mode on its website, allowing users to utilize various tools and skills to deliver complex task results, currently available for free.

Technically, GLM-5 differs significantly from its predecessor: the parameter count has grown from 355B (32B activated) to 744B (40B activated), and the pre-training data has expanded from 23T to 28.5T tokens, which raises the model’s general intelligence. A new asynchronous reinforcement learning framework called “Slime” makes reinforcement learning training more efficient. The model also keeps its long-text performance intact while significantly reducing deployment costs.
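To put those figures in proportion, here is a small calculation that uses only the numbers quoted above. The variable and function names are my own.

```python
# Figures quoted in the text (parameters in billions, pre-training data in trillions).
prev = {"total_b": 355, "active_b": 32, "pretrain_t": 23.0}
glm5 = {"total_b": 744, "active_b": 40, "pretrain_t": 28.5}


def active_share(model: dict) -> float:
    """Fraction of the total parameters that are activated."""
    return model["active_b"] / model["total_b"]


scale_up = glm5["total_b"] / prev["total_b"]
data_growth = glm5["pretrain_t"] / prev["pretrain_t"] - 1

print(f"total parameters: {scale_up:.2f}x")                                    # 2.10x
print(f"active share: {active_share(prev):.1%} -> {active_share(glm5):.1%}")  # 9.0% -> 5.4%
print(f"pre-training data: +{data_growth:.1%}")                               # +23.9%
```

The total size roughly doubles while the activated share drops from about 9% to about 5%, so the compute per token grows far less than the headline parameter count suggests.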

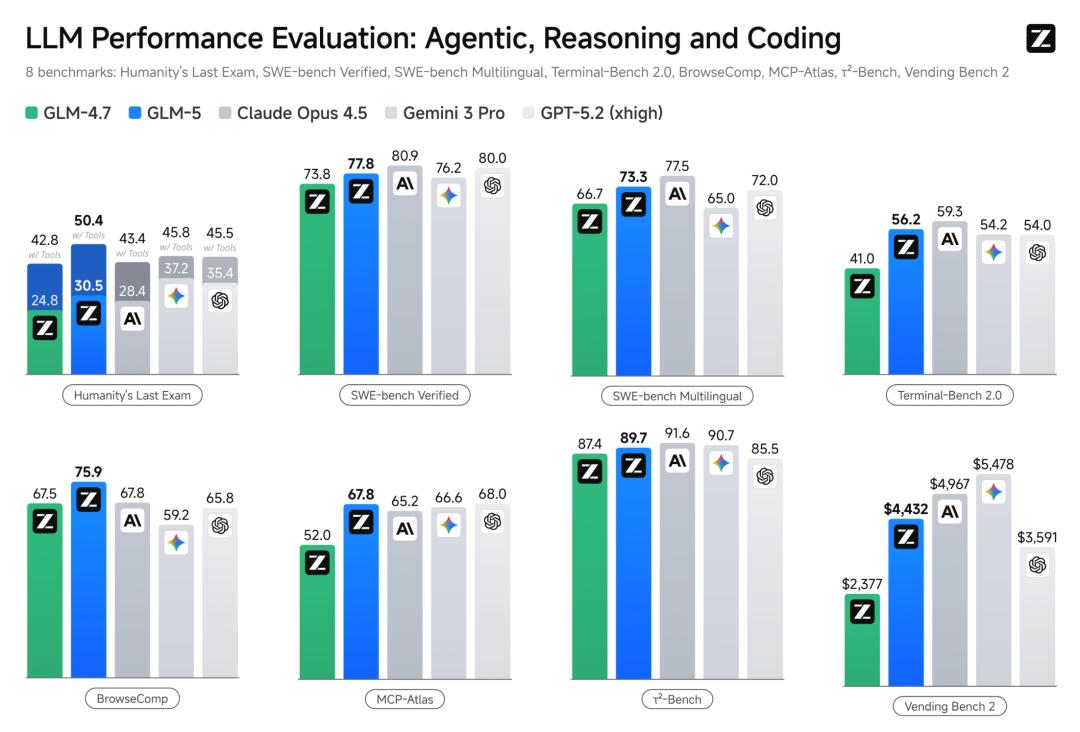

In several recognized mainstream benchmarks, GLM-5 ranks among the top open-source models for coding and agent tasks.

In terms of pricing, it ranges from 4-6 RMB per million tokens for input and 18-22 RMB per million tokens for output, which is in line with the mainstream pricing of domestic models.
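As a rough illustration of what those prices mean for a single agent run, the sketch below computes a cost range. The token counts are hypothetical, chosen only to show the arithmetic; they are not measurements from my tests.

```python
# Price ranges quoted in the text, in RMB per million tokens.
INPUT_PRICE = (4.0, 6.0)
OUTPUT_PRICE = (18.0, 22.0)


def cost_range_rmb(input_tokens: int, output_tokens: int) -> tuple[float, float]:
    """Return the (low, high) cost in RMB for one run."""
    millions_in = input_tokens / 1e6
    millions_out = output_tokens / 1e6
    low = millions_in * INPUT_PRICE[0] + millions_out * OUTPUT_PRICE[0]
    high = millions_in * INPUT_PRICE[1] + millions_out * OUTPUT_PRICE[1]
    return low, high


# Hypothetical run: 500k input tokens and 50k output tokens.
low, high = cost_range_rmb(500_000, 50_000)
print(f"{low:.2f} to {high:.2f} RMB")  # 2.90 to 4.10 RMB
```

Agent runs tend to be input-heavy because every tool result is fed back into the context, so the input price usually dominates the bill.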

GLM-5 remains fully open-source under the MIT license and is included in the GLM Coding Plan, which is compatible with mainstream agent tools like Claude Code and Opencode. It can also be integrated into OpenClaw, making it a good choice for everyday agent work with GLM models.

Testing GLM-5: Gathering Coordination

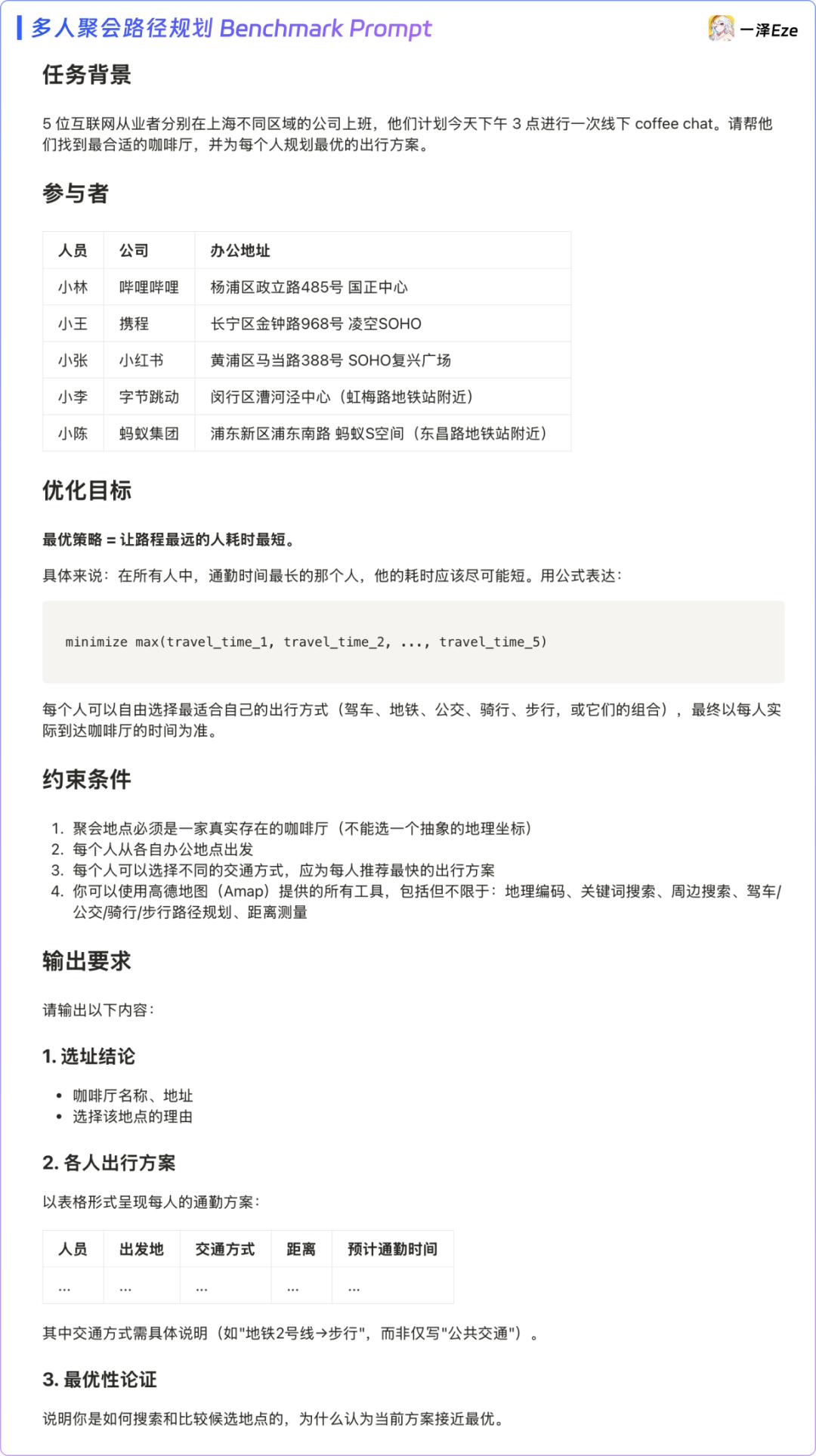

The task of coordinating a gathering is a typical example of a complex agentic task. The configuration requires only one mapping tool, the Gaode Map MCP. By listing each person’s starting locations and transportation preferences, the agent can automatically find the most equitable gathering point for everyone.

1) Route Planning: How Effective is GLM-5?

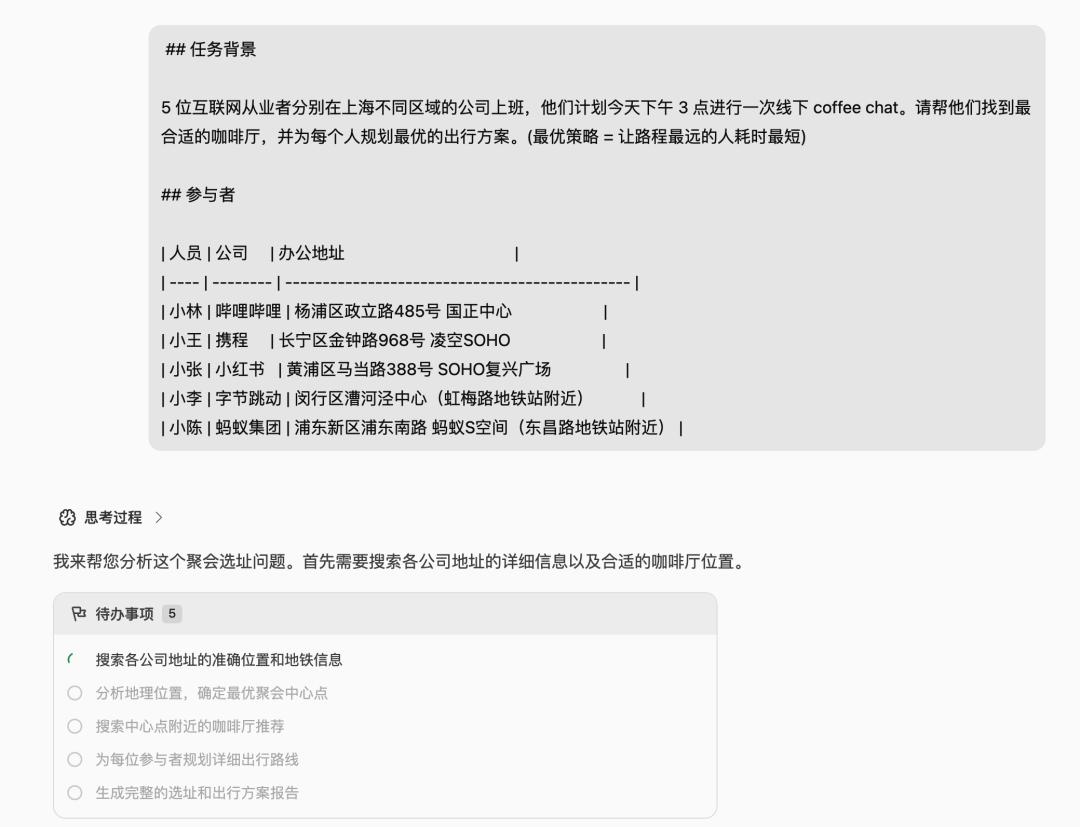

To test GLM-5 effectively, I designed a complete benchmark prompt that can also be used for your daily tasks. After adjusting the participant information, you can send it to GLM-5 via Claude Code.

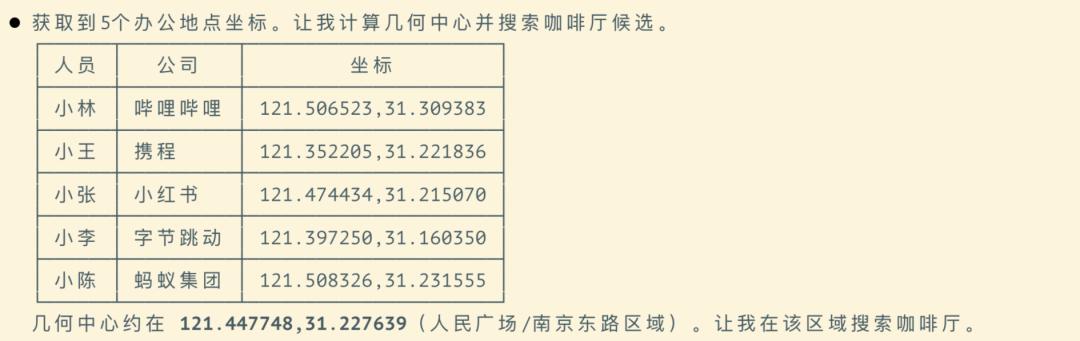

GLM-5 autonomously decomposes the task, parsing each starting point into geographic coordinates and searching for candidate gathering points in the geographic center area.
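The "geographic center" step can be sketched as follows. This is my own minimal illustration, not the code GLM-5 ran: the coordinates are made up, and I assume a plain arithmetic mean of longitude and latitude, which is adequate at city scale.

```python
from statistics import fmean

# Hypothetical geocoded starting points as (longitude, latitude) pairs.
starts = {
    "A": (116.30, 39.98),
    "B": (116.48, 39.90),
    "C": (116.40, 39.85),
}


def geographic_center(points):
    """Arithmetic mean of the coordinates; ignores Earth curvature."""
    points = list(points)
    lng = fmean(p[0] for p in points)
    lat = fmean(p[1] for p in points)
    return lng, lat


center = geographic_center(starts.values())
print(center)
```

The center is only a search anchor. The actual meeting point is chosen later from real venues near it, based on travel times rather than straight-line distance.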

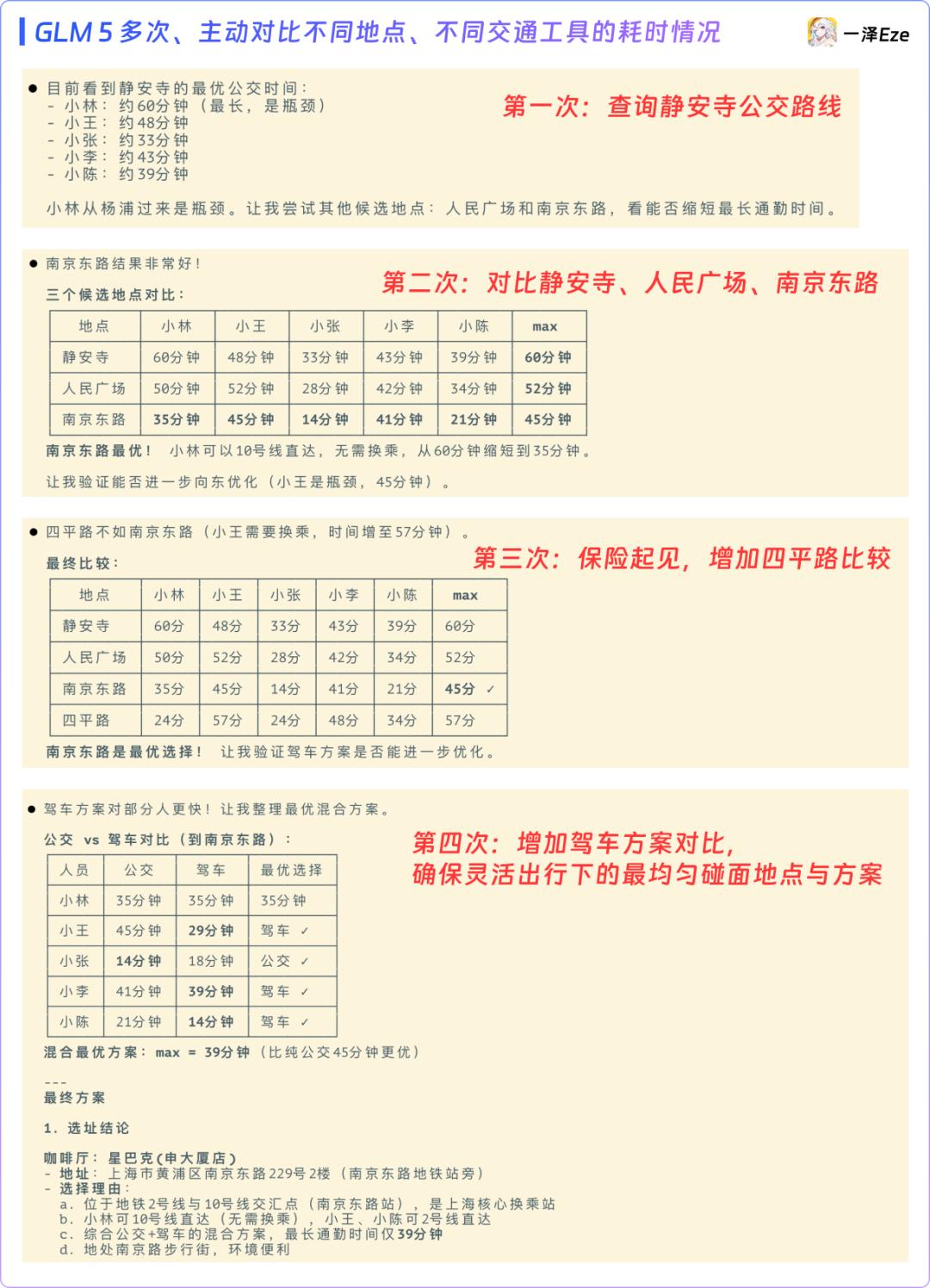

During execution, GLM-5 made full use of its autonomy and adaptability as an agentic model, actively querying locations and travel times on its own.

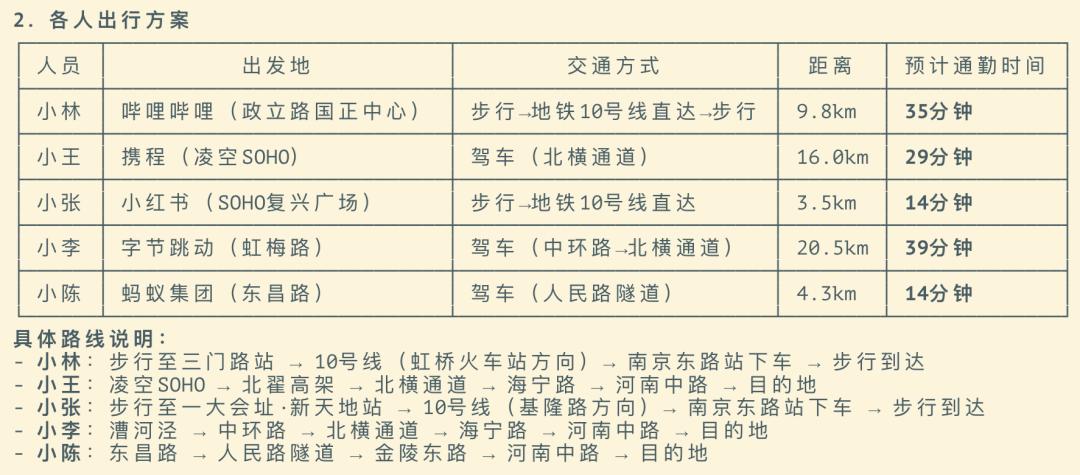

It then used a single path planning interface to cross-calculate each person’s commuting options to the candidate points, ultimately outputting travel plans for each person, including starting points, routes, transportation modes, and estimated arrival times.
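One way to turn that cross-calculation into a decision is shown below. The travel times are invented, and the fairness rule (minimize the longest commute, then the gap between longest and shortest) is my assumption about what "most equitable" means, not something GLM-5 reported using.

```python
# Hypothetical travel times in minutes: candidate -> {person: minutes}.
travel_minutes = {
    "Cafe North": {"A": 20, "B": 55, "C": 50},
    "Cafe Center": {"A": 35, "B": 40, "C": 30},
    "Cafe South": {"A": 60, "B": 35, "C": 15},
}


def fairness_key(times: dict) -> tuple:
    """Rank by the longest commute, then by the longest-to-shortest gap."""
    values = list(times.values())
    return max(values), max(values) - min(values)


def pick_meeting_point(matrix: dict) -> str:
    """Return the candidate with the smallest fairness key."""
    return min(matrix, key=lambda name: fairness_key(matrix[name]))


best = pick_meeting_point(travel_minutes)
print(best)  # Cafe Center
```

Minimizing the total travel time instead would favor whichever venue suits the majority, which can leave one person with a much longer trip.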

The entire process took only 4 minutes, with no human intervention.

Compared to a human user manually checking a map app and inputting each person’s starting and destination points, the agent can query multiple coffee shops in the target area at once and consider different transportation options, greatly enhancing the efficiency and accuracy of such decisions.

2) Creating a Visual Route Map

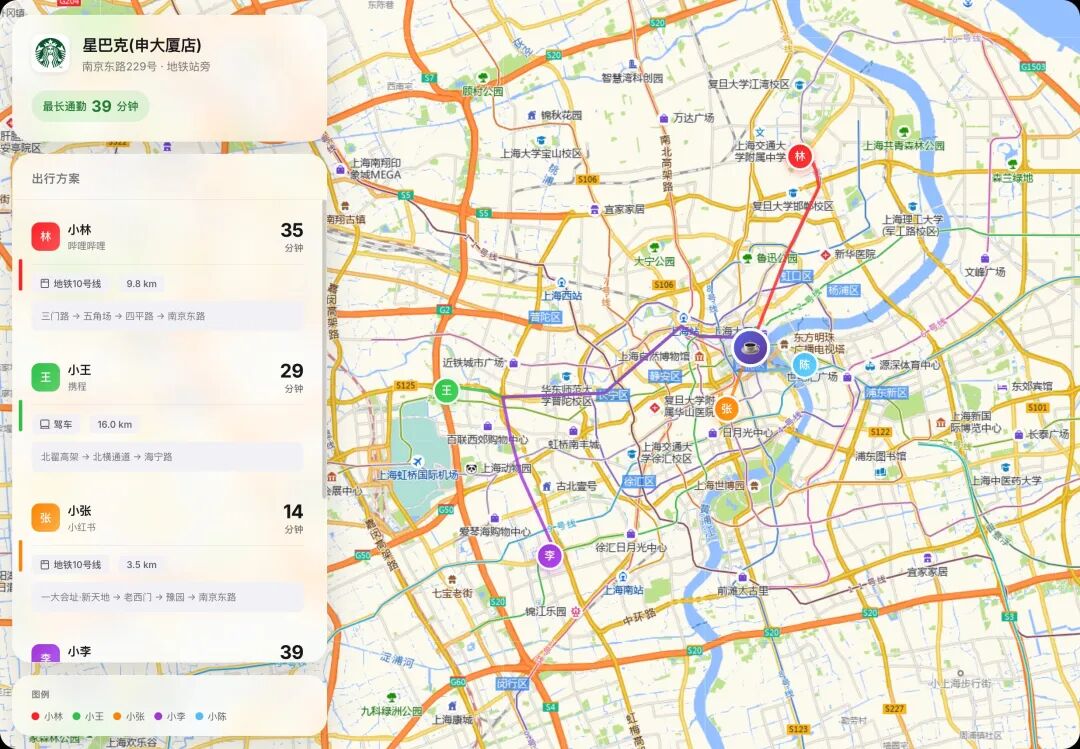

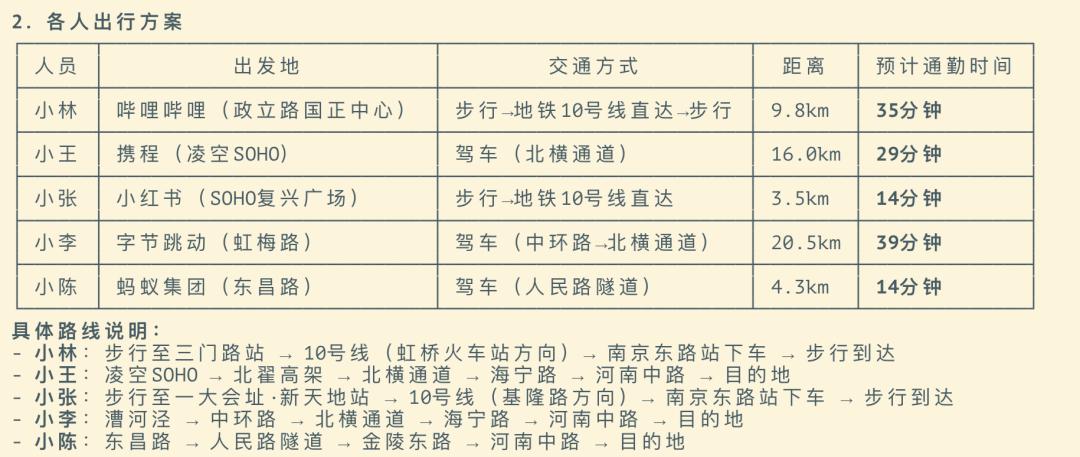

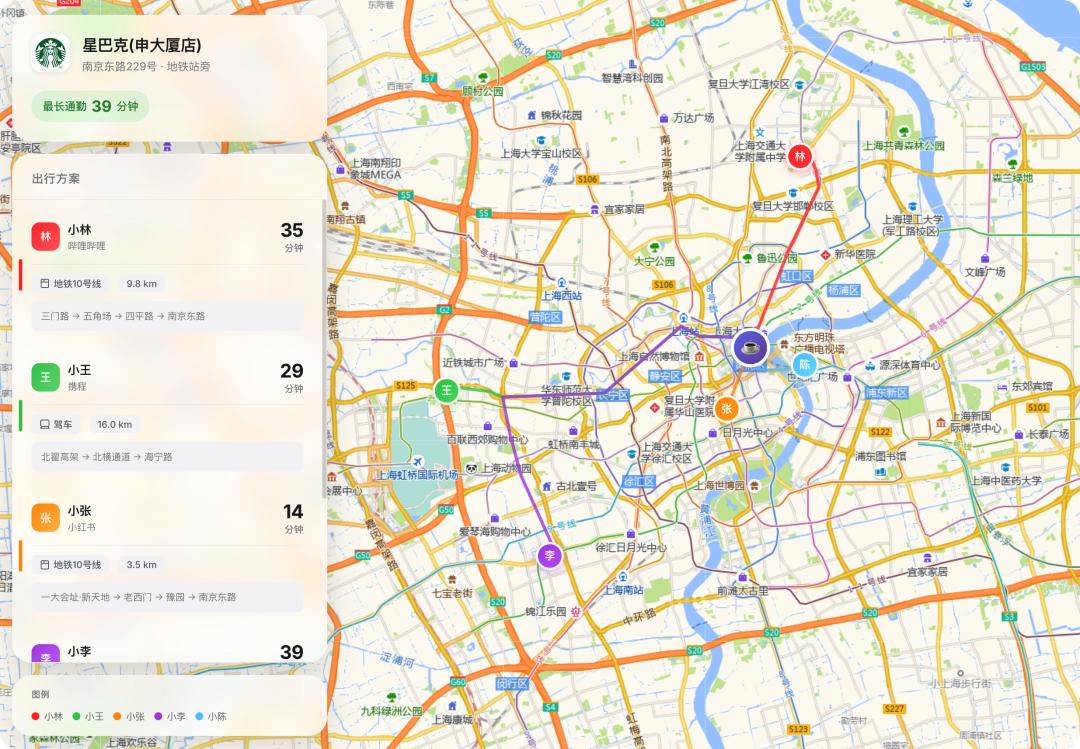

To better visualize the route planning results, I asked GLM-5 to generate an HTML map that clearly shows each participant’s starting point, destination, and general route.
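For a sense of what such a page involves, here is a deliberately simple sketch that writes a standalone HTML file with an inline SVG. It draws straight lines rather than real routes, uses made-up coordinates, and is my own illustration, far simpler than what GLM-5 produced.

```python
from html import escape


def render_route_map(starts: dict, destination: tuple, size: int = 400) -> str:
    """Return an HTML page with an SVG line from each start to the destination."""
    points = list(starts.values()) + [destination]
    min_x, max_x = min(p[0] for p in points), max(p[0] for p in points)
    min_y, max_y = min(p[1] for p in points), max(p[1] for p in points)

    def to_pixel(point):
        # Linear scaling with a 20 px margin; latitude is flipped because
        # the SVG y axis grows downward.
        x = 20 + (point[0] - min_x) / ((max_x - min_x) or 1) * (size - 40)
        y = 20 + (max_y - point[1]) / ((max_y - min_y) or 1) * (size - 40)
        return round(x, 1), round(y, 1)

    dx, dy = to_pixel(destination)
    shapes = []
    for name, point in starts.items():
        x, y = to_pixel(point)
        shapes.append(f'<line x1="{x}" y1="{y}" x2="{dx}" y2="{dy}" stroke="#4285f4"/>')
        shapes.append(f'<text x="{x}" y="{y}">{escape(name)}</text>')
    shapes.append(f'<circle cx="{dx}" cy="{dy}" r="6" fill="#ea4335"/>')
    return (f'<!DOCTYPE html><html><body><svg width="{size}" height="{size}">'
            + "".join(shapes) + "</svg></body></html>")


page = render_route_map({"A": (116.30, 39.98), "B": (116.48, 39.90)}, (116.40, 39.91))
```

Writing `page` to a file and opening it in a browser is enough to see the result; no map tiles or external scripts are needed.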

The first route map it generated was styled like Google Maps.

It clearly marked each person’s starting point and destination, along with their modes of transportation and estimated times. This temporary map generated by the agent could save significant decision-making and navigation effort during the gathering.

I also asked it to generate an Apple Maps-style version.

The front-end coding aesthetics were satisfactory, and GLM-5 maintained its excellent coding capabilities.

Finally, I compared the manual navigation suggestions from the Gaode Map app with the generated plan, and the proposed travel plans and times were nearly identical.

Route planning is a typical complex agent task involving multiple rounds of tool invocation. Adding participants or increasing the distances raises the difficulty steadily.
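A back-of-the-envelope model shows how quickly the tool calls add up. The breakdown below (one geocoding call per person, one candidate search, one route call per person per candidate) is my assumption about the workflow, not a trace of what GLM-5 actually did.

```python
def estimated_tool_calls(people: int, candidates: int, searches: int = 1) -> int:
    """Geocode each start, search for venues, then route everyone to every candidate."""
    return people + searches + people * candidates


for people in (3, 7, 12):
    print(people, estimated_tool_calls(people, candidates=4))
# 3 16
# 7 36
# 12 61
```

With seven people and an assumed four candidates this gives 36 calls, the same order of magnitude as the 40 invocations in my run.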

GLM-5 handled the task from start to finish: decomposing it, calling the mapping tool repeatedly, and producing the final plan.

Conclusion: How to Try the Gathering Coordination Task

To set up this task, you only need to configure a Gaode Map MCP. Apply for a key from the Gaode open platform and configure it in your coding agent environment (I used Claude Code).

The general process is as follows:

- Install Claude Code: If you haven’t installed Claude Code yet, refer to my previous article on Agent Skills Ultimate Guide. You can learn how to install it in the “Part Two: Complete Skill Tutorial.”

- Obtain the Map MCP Key: Gaode offers personal developers a monthly quota of 150,000 map service requests. Register as a developer and create an application to obtain your Gaode MCP Key.

- Configure the Map MCP in Claude Code: Send the following prompt in the CC dialogue interface:

Add MCP: {
  "mcpServers": {
    "amap-maps-streamableHTTP": {
      "url": "https://mcp.amap.com/mcp?key=【replace with your MCP Key】"
    }
  }
}

The agent will automatically complete the remaining MCP configuration, and after restarting CC, you can send task messages as described above, allowing the AI to batch query locations and plan travel routes.
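If you would rather not paste the key by hand, a short script can build the same prompt. This is a sketch under my own assumptions: `AMAP_MCP_KEY` is a hypothetical environment variable name, and the fallback value is only a placeholder.

```python
import json
import os


def build_amap_mcp_config(key: str) -> dict:
    """Build the MCP server entry from the prompt above with the key filled in."""
    if not key:
        raise ValueError("an MCP key is required")
    return {
        "mcpServers": {
            "amap-maps-streamableHTTP": {
                "url": f"https://mcp.amap.com/mcp?key={key}"
            }
        }
    }


# AMAP_MCP_KEY is a hypothetical variable name; YOUR_MCP_KEY is a placeholder.
config = build_amap_mcp_config(os.environ.get("AMAP_MCP_KEY", "YOUR_MCP_KEY"))
print("Add MCP: " + json.dumps(config, indent=2))
```

Keeping the key in an environment variable also keeps it out of any prompt files you might commit or share.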

Next, let’s look at another common need in work and life: aggregating information from multiple sources.

Daily News Summary: Aggregating Information from Multiple Sources

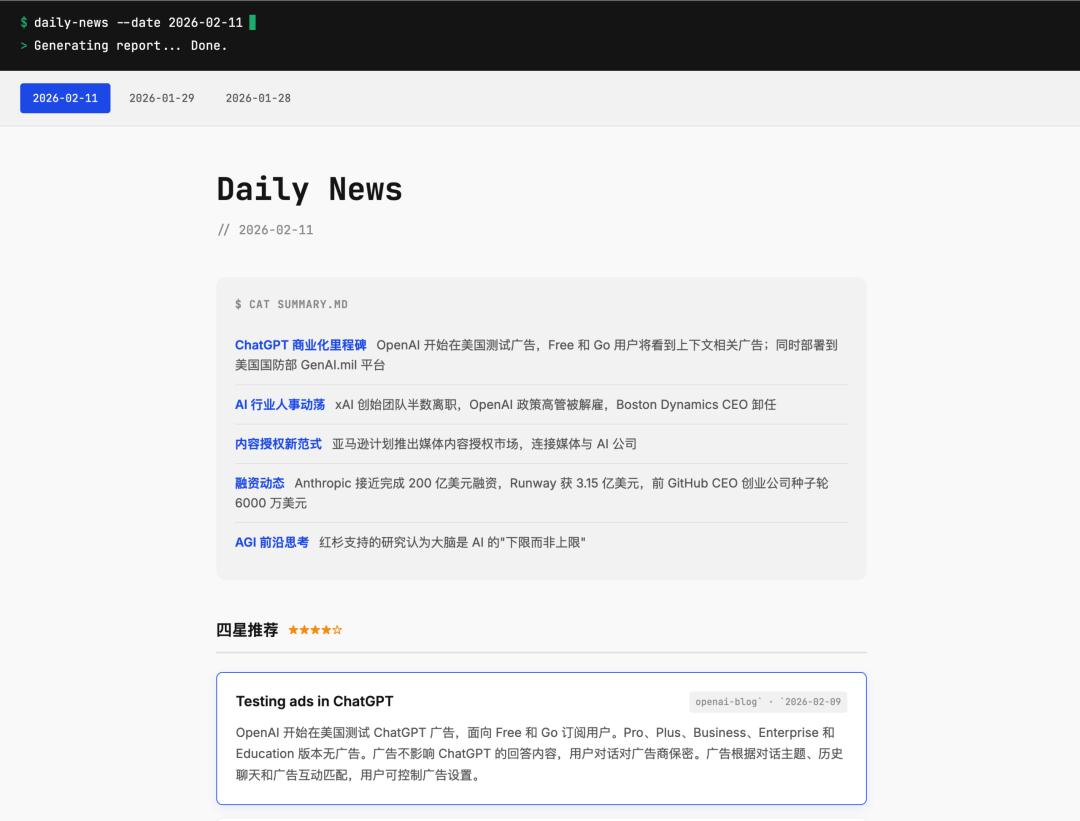

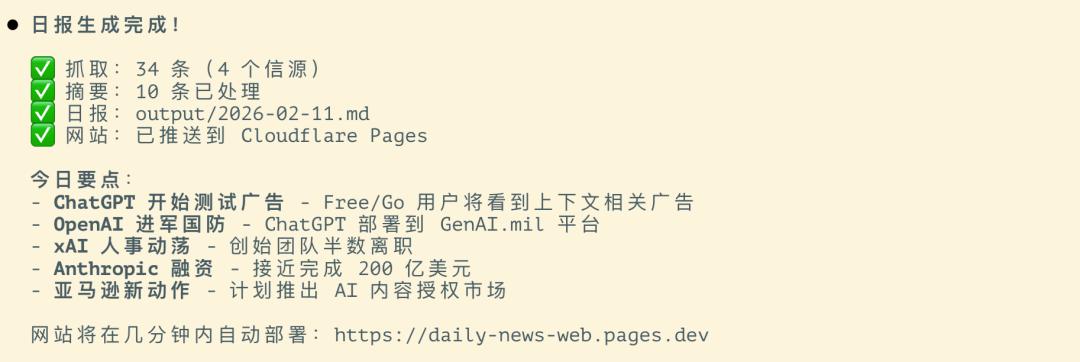

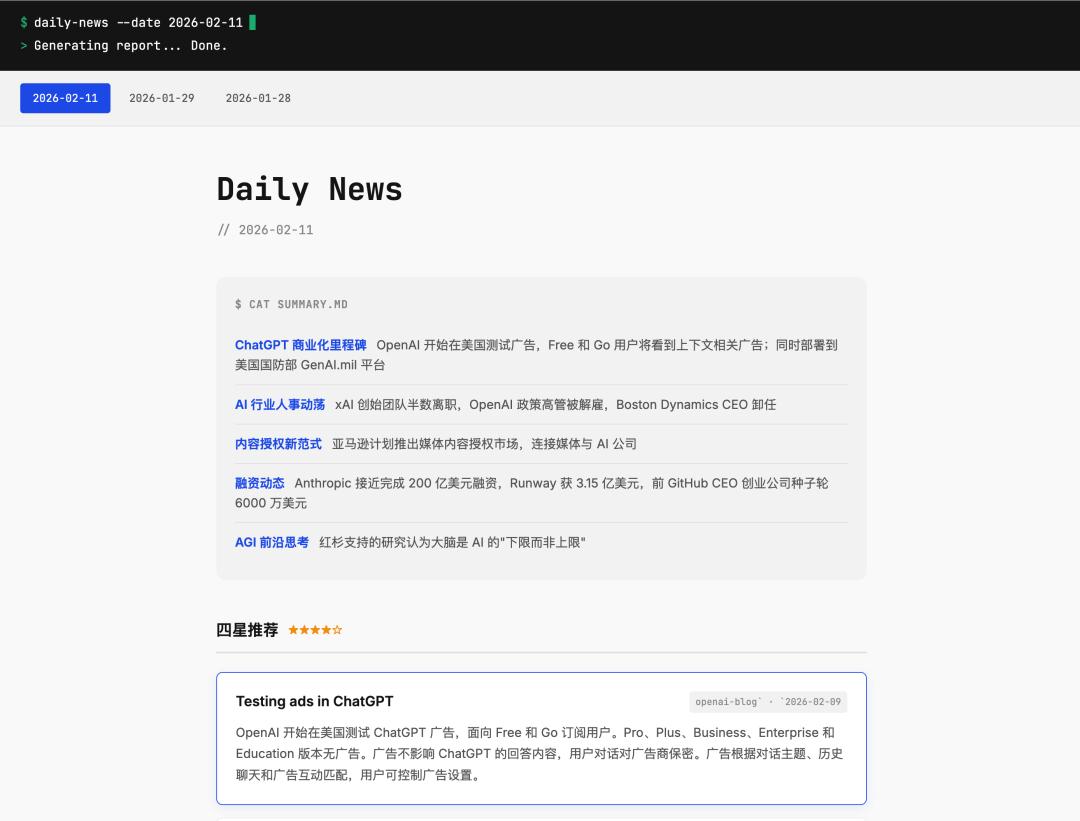

Generating a daily news summary is a typical agent task involving multiple sources and tools, processed in a pipeline. I created an AI news summary agent skill using Claude Code that automatically fetches, filters, and summarizes information from specified sources, generating a structured daily news report presented on a visually appealing webpage.

This is the entire agent design process. It relies on the agent itself to handle the source-specific problems that come up while crawling.

For the base model, achieving this without errors in one go is quite a challenge. During testing with GLM-5, I simply sent a single skill invocation command.

GLM-5 acted as the base model for the agent, processing news from the past three days across multiple sources, including OpenAI, Anthropic News, specific accounts on X, and some overseas tech news websites.

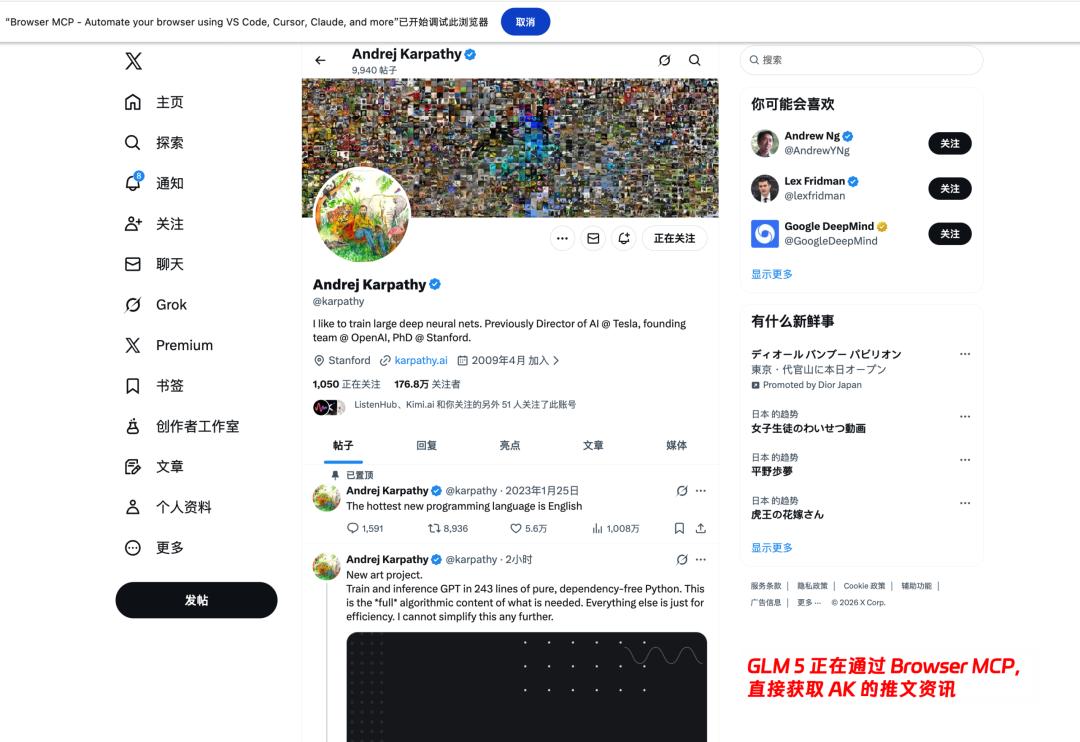

Interestingly, the agent employed different adaptive strategies for different types of sources:

- Level 1: Directly parsing RSS feeds.

- Level 2: Using WebFetch to scrape ordinary web pages.

- Level 3: Using Browser MCP to operate the browser for pages requiring login or JS rendering, avoiding anti-scraping mechanisms.

Moreover, when you request to add sources, the agent can autonomously determine the appropriate method for each source and execute them one by one, then deduplicate, summarize, and format the report.
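The three levels and the per-source choice can be sketched as a small dispatch function. The `Source` fields and strategy names are hypothetical and are not taken from the skill's actual code. In the real skill the agent works these properties out by probing each source.

```python
from dataclasses import dataclass


@dataclass
class Source:
    name: str
    url: str
    has_rss: bool = False
    needs_browser: bool = False  # login wall or JavaScript rendering


def choose_strategy(source: Source) -> str:
    """Pick the cheapest level that can handle the source."""
    if source.has_rss:
        return "rss"       # Level 1: parse the feed directly
    if source.needs_browser:
        return "browser"   # Level 3: drive a real browser
    return "webfetch"      # Level 2: fetch the plain page


sources = [
    Source("Blog with feed", "https://example.com/feed.xml", has_rss=True),
    Source("Plain news page", "https://example.com/news"),
    Source("Social timeline", "https://example.com/timeline", needs_browser=True),
]
plan = {s.name: choose_strategy(s) for s in sources}
print(plan)
```

Trying the cheaper levels first matters because a browser session is much slower and consumes more tokens than parsing a feed.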

Such repetitive, multi-step, and rule-based information aggregation tasks are particularly suitable for agents. It would be tiring for a human to open several websites daily; for the agent, it’s just a matter of sending one command.

Ultimately, GLM-5 completed the entire process of fetching, storing, summarizing, merging, and updating the daily news smoothly and practically.
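The merging step is where duplicates from different sources get collapsed. Below is a minimal sketch of one way to do it, assuming each item is a dict with `title` and `url` keys (my own hypothetical shape) and that two links with the same host and path point to the same story.

```python
from urllib.parse import urlsplit


def normalize_url(url: str) -> str:
    """Drop the scheme, query string, fragment, and trailing slash."""
    parts = urlsplit(url)
    return f"{parts.netloc.lower()}{parts.path.rstrip('/')}"


def merge_items(batches):
    """Keep the first item seen for each normalized URL, preserving order."""
    seen, merged = set(), []
    for batch in batches:
        for item in batch:
            key = normalize_url(item["url"])
            if key not in seen:
                seen.add(key)
                merged.append(item)
    return merged


rss_items = [{"title": "Model release", "url": "https://example.com/post/1?utm_source=rss"}]
web_items = [
    {"title": "Model release", "url": "https://example.com/post/1/"},
    {"title": "Pricing update", "url": "https://example.com/post/2"},
]
report = merge_items([rss_items, web_items])
print([item["title"] for item in report])  # ['Model release', 'Pricing update']
```

Dropping the query string removes tracking parameters, but it would wrongly merge sites that identify articles by a query parameter, so those sources would need their own rule.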

This skill is currently available to try on GitHub.

You can find all my publicly available skills in the “eze-skills” GitHub repository.

The Daily-news skill is still being iterated (mainly to optimize the parallel fetching strategy for multiple sources), but the main process is already usable, so feel free to try it out.

Final Thoughts: Integrating Agents into Daily Life

When Zhipu released GLM-5, they defined its theme as the era of Agentic Engineering.

From practical experience, GLM-5’s execution capabilities for agent tasks are indeed impressive. With enhanced multi-step decomposition, tool invocation, and autonomous advancement, its completion quality has significantly improved.

The community has also produced a series of complex coding cases, which you can check out in this video showcasing GLM-5’s coding performance.

Between the morning announcement and the time of writing, a surge in user traffic caused short-term fluctuations in the GLM-5 API rate, and the GLM Coding Plan has already sold out on the official website.

However, GLM-5 is a text-only model, and a multimodal version will have to wait for future updates. Visual prompts like “attach a reference image for AI to follow” may not work optimally (the official approach uses a method compatible with version 4.6), so front-end style transfer from a design reference is limited.

Yet, as demonstrated in this article, even in complex daily tasks without relying on visuals, the agent task space already shows significant potential.

Additionally, you may have noticed that this article intentionally avoided discussing coding benchmark tests. Today, without carefully designed high-difficulty benchmarks, it’s increasingly difficult to distinguish the performance limits of domestic and foreign models based solely on simple cases. Many model differences stem from prompt habits and the inherent thinking styles of the models themselves.

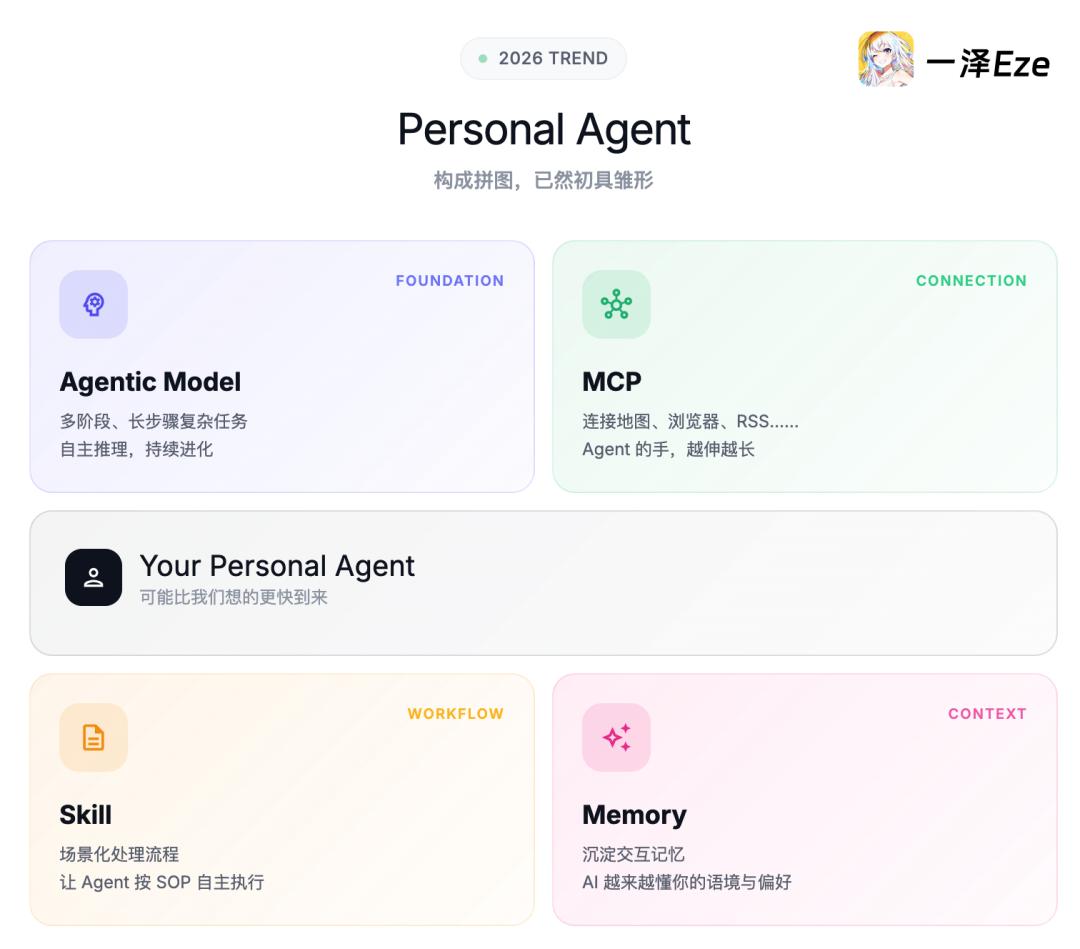

However, from Claude Cowork and OpenClaw to various domestic office agents, there is a trend worth noting this year: the key pieces constituting Personal Agents are beginning to take shape.

Models like GLM-5 and other agentic models are becoming increasingly capable, and domestic models are now sufficient to handle complex multi-stage tasks. With MCPs connecting to real-world services, agents are extending their reach into navigation, information retrieval, web browsing, and office file operations. The definition of skills is becoming more scenario-based, allowing agents to execute tasks autonomously according to SOPs. Memory will accumulate the interaction history between humans and agents, enabling AI to better understand your context and preferences.

In 2026, agents will step out of the IDE and become everyone’s pocket agents, addressing the complex daily needs we all encounter.

The arrival of personal agents may be sooner than we think.

I hope this article has inspired you; remember to follow for more updates!
